Training has two distinct aspects. First, there’s the design and delivery of the course. Then there’s the impact and impression it has on learners. The two aren’t independent, of course: one has a direct effect on the other. And getting training feedback to find out how your course was received is an important step towards improving the design and delivery for future learners.

Collecting learner feedback has other benefits too. It’s a powerful motivator. It gives employees a sense of engagement and ownership in the process. And it shows commitment to a culture of continued learning.

There’s little doubt that collecting training feedback is important. We know this. We know you know this. It’s how you collect your feedback that’s often less straightforward.

Collecting learner feedback: The challenges

Finding an effective way of distributing and collecting post-training evaluation comes with challenges. The good news is there’s a simple way to conquer those obstacles.

But before we share the details, let’s take a quick look at what you might be up against.

- Time: The process of collecting learner feedback can be resource-intensive. If you’re managing your training feedback manually, distributing, collating, and keeping track of all the responses takes time.

- Confidentiality: Sourcing honest, open answers isn’t easy. Protecting training feedback anonymity can be difficult (or be perceived as being difficult) if you’re using emails, handouts, or including feedback Q&As at the end of live training sessions to source and record responses.

- UX design: Poorly designed post-training surveys put people off. Learners won’t take part or fully engage in post-training evaluation if your survey is difficult to access or navigate.

- Complexity: Some questions or topics can be off-putting, too. If they’re poorly worded, complex, or require a lot of effort to complete, there’s a big chance your learners simply won’t bother to respond.

- Analysis: Collating and analyzing feedback requires a structured and consistent approach. If there’s too much free text information, conflicting formats, or fixed values that haven’t been properly considered, evaluating data can prove difficult. And your analysis won’t be reliable.

Collecting learner feedback: The solution

OK. We’ve covered some of the main challenges. And, while it’s important not to underestimate them, if you’re using TalentLMS there’s no need to worry.

Why? Because you’ve got everything you need to create effective, accessible, accurate, and actionable training feedback surveys in minutes. All from the same platform you use to create your training courses.

Create your survey using a combination of question types

Feedback is most valuable when it combines both qualitative (textual or descriptive) and quantitative (numerical) data.

Using TalentLMS, it’s easy to produce both. Just choose from the following different question types when building your survey:

- Multiple choice: This is a form of objective assessment. Use this option if you want learners to select answers from a list of choices. It works best when the potential answers are specific. An example of this might be: “What was the most difficult part of this course? a. the final assignment, b. attending the live sessions, c. going through the theoretical sessions.”

- Free text: Here, learners insert their answers as text. This type of question is helpful when you want to get qualitative feedback, and don’t want to limit learners by providing specific options in advance. For example: “What do you think would make this course more helpful?”

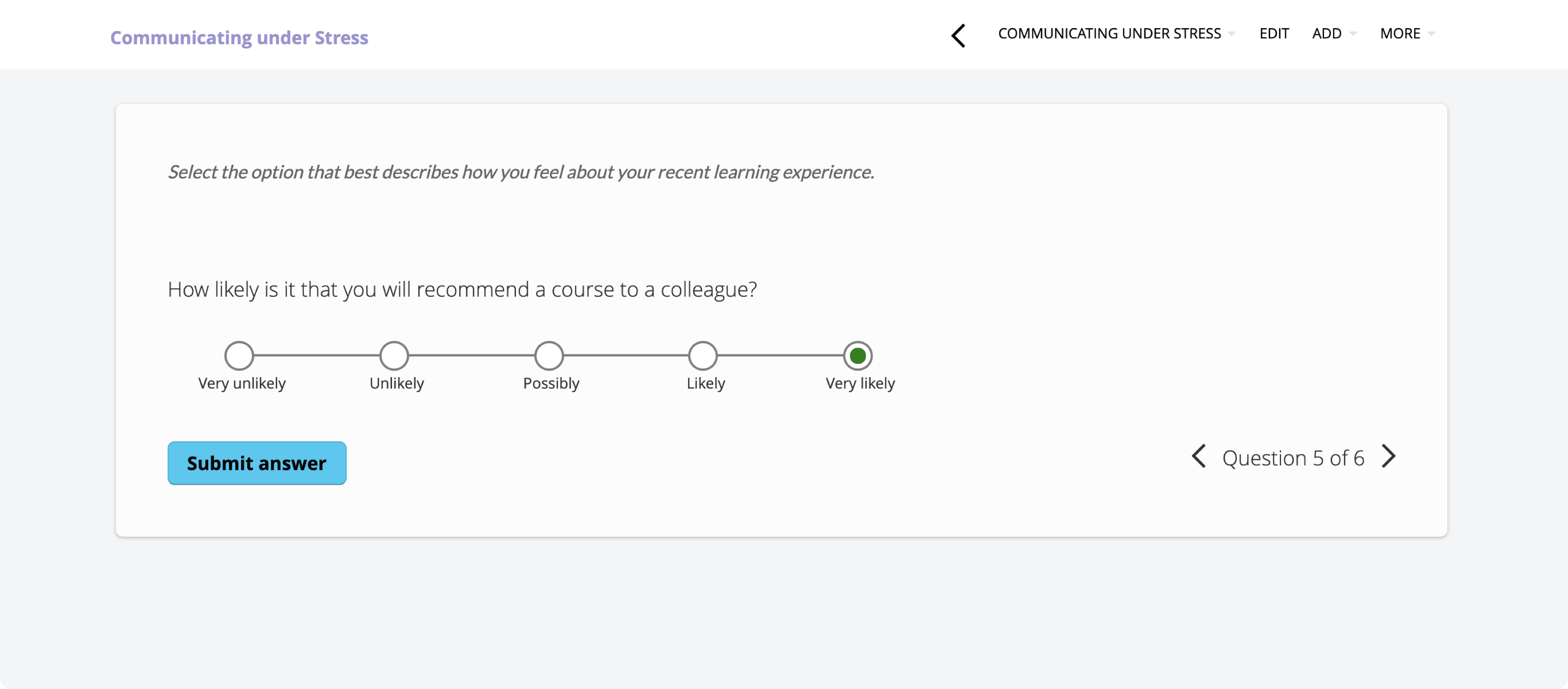

- Likert scale: This gives learners a rating scale that measures agreement, satisfaction, frequency, likelihood, quality, and more. In response to a question or statement, learners select the option that best matches their opinion. For example: “How likely are you to recommend this course? a. very likely, b. likely, c. possibly, d. unlikely, e. very unlikely.”

To gather truly comprehensive and insightful training feedback, it’s best to include a mix of all three question types in your post-training evaluation survey. And the beauty of doing this through TalentLMS is it couldn’t be easier or quicker.
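If it helps to picture the structure, a survey that mixes all three question types might be modeled along these lines. This is a minimal Python sketch for illustration only: the `Question` and `Survey` classes, the `kinds_used` helper, and the course name are hypothetical and are not part of TalentLMS or its API. The question wording comes from the examples above.

```python
from dataclasses import dataclass, field
from typing import List, Set


# Hypothetical model for illustration only; this is NOT the TalentLMS API.
@dataclass
class Question:
    text: str
    kind: str  # "multiple_choice", "free_text", or "likert"
    options: List[str] = field(default_factory=list)  # stays empty for free text


@dataclass
class Survey:
    name: str
    course: str
    questions: List[Question] = field(default_factory=list)

    def kinds_used(self) -> Set[str]:
        """Return the set of question types present in the survey."""
        return {q.kind for q in self.questions}


survey = Survey(
    name="Post-training evaluation",
    course="Example course",  # placeholder course name
    questions=[
        Question(
            "What was the most difficult part of this course?",
            "multiple_choice",
            [
                "The final assignment",
                "Attending the live sessions",
                "Going through the theoretical sessions",
            ],
        ),
        Question(
            "What do you think would make this course more helpful?",
            "free_text",
        ),
        Question(
            "How likely are you to recommend this course?",
            "likert",
            ["Very likely", "Likely", "Possibly", "Unlikely", "Very unlikely"],
        ),
    ],
)

# Check that the survey mixes all three question types.
ALL_KINDS = {"multiple_choice", "free_text", "likert"}
print(survey.kinds_used() == ALL_KINDS)
```

The point of the sketch is simply that fixed-option questions carry a list of answers, while free text questions don’t, which is what makes the former easier to analyze consistently.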

There are just two simple steps required to create a multifaceted and effective survey:

Step 1.

Create your survey. Just assign it to a course and give it a name.

Step 2.

Add your questions. For each one, choose one of three question types (multiple choice, free text, or Likert). And then assign the possible answers.

Done.

Learn more about how to work with surveys in TalentLMS.

Collecting training feedback: FAQs and best practices

You’ve learned how to build a post-training evaluation survey with TalentLMS. Now it’s time to put that into action. Here are some questions that may arise during that process, along with tips that will help you get more accurate and meaningful feedback from your learners.

- What’s the Likert scale?

- Does TalentLMS support Likert scale questions?

- When should I gather training feedback?

- What kind of training feedback should I collect?

1. What’s the Likert scale?

Multiple choice and free text question types are pretty common and self-explanatory—the clue’s in the name. But, while you’ve probably answered a Likert-type question before, chances are you might not have known that’s what it was.

So here’s a bit of background.

Named after social scientist Rensis Likert, the Likert scale is considered one of the most reliable ways to measure attitudes, opinions, and reported behavior.

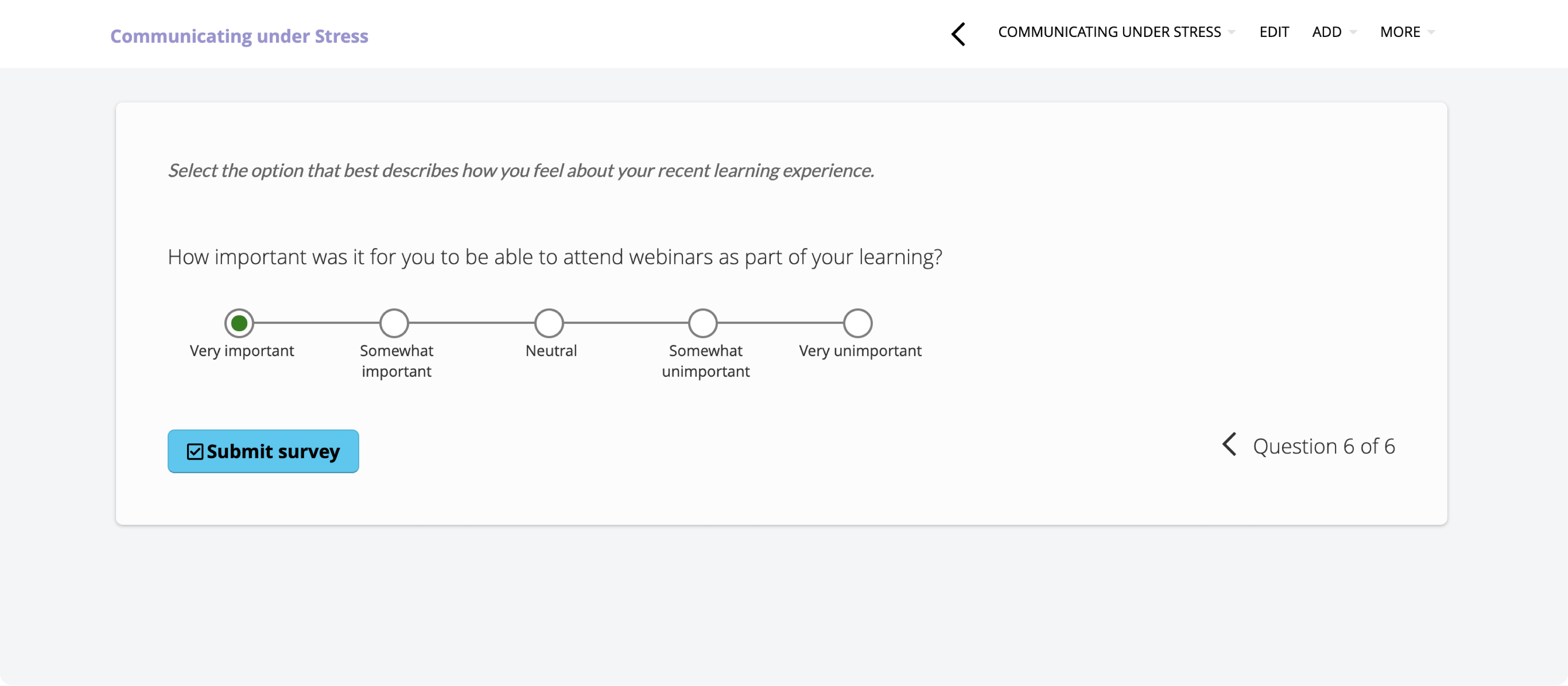

Sometimes referred to as a satisfaction scale, a Likert scale typically offers between three and seven (most often five) pre-defined opinion points. These points run from one extreme to the other, with one neutral option in the middle.

Likert scale questions are popular because they can make complex opinions on specific topics easy to analyze and understand. Sourcing degrees of opinion (rather than binary “yes” or “no” answers) also gives admins a deeper understanding and richer picture of the feedback they’ve obtained. This, in turn, helps identify which areas of training need to be improved.
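To see why degrees of opinion are easy to analyze, here’s a minimal Python sketch that turns a handful of Likert responses into a distribution and an average score. The five agreement labels, the 1 to 5 scoring, the `summarize` function, and the sample responses are all assumptions made for illustration. They aren’t taken from TalentLMS.

```python
from collections import Counter

# Illustrative five-point agreement scale; these labels are assumptions.
SCALE = ["Strongly disagree", "Disagree", "Neutral", "Agree", "Strongly agree"]
# Map each label to a score from 1 (lowest) to 5 (highest).
SCORES = {label: position + 1 for position, label in enumerate(SCALE)}


def summarize(responses):
    """Return (share of responses per label, mean score) for Likert answers."""
    if not responses:
        raise ValueError("No responses to summarize")
    counts = Counter(responses)
    total = len(responses)
    distribution = {label: counts.get(label, 0) / total for label in SCALE}
    mean_score = sum(SCORES[r] for r in responses) / total
    return distribution, mean_score


# Made-up sample responses to a statement such as
# "I found the topic of the presentation interesting."
responses = ["Agree", "Strongly agree", "Neutral", "Agree", "Disagree"]
distribution, mean_score = summarize(responses)

print(distribution["Agree"])   # share of learners who chose "Agree"
print(round(mean_score, 2))    # average on the 1-5 scale
```

Keep in mind that Likert responses are ordinal, so the average is a rough summary. The distribution often tells you more, because it shows whether opinions cluster or split.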

2. Does TalentLMS support Likert scale questions?

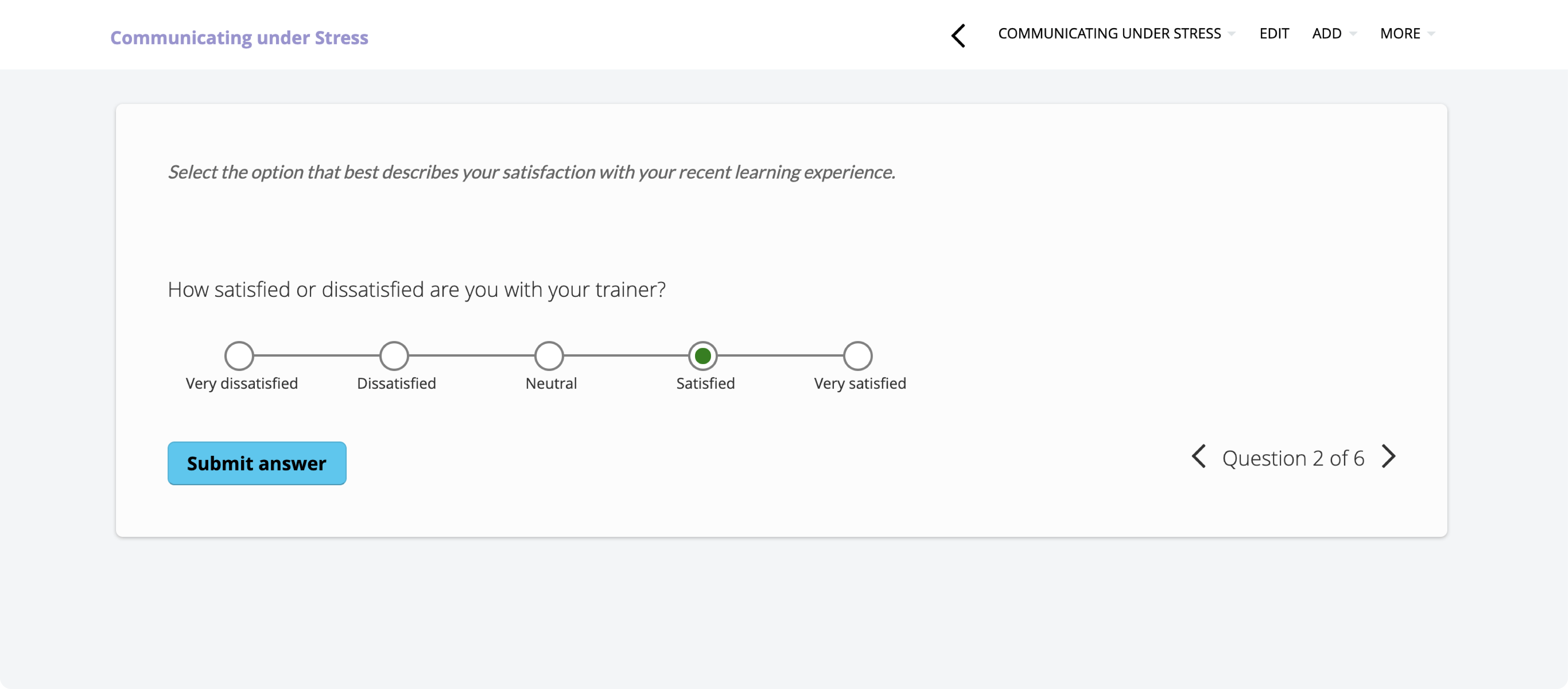

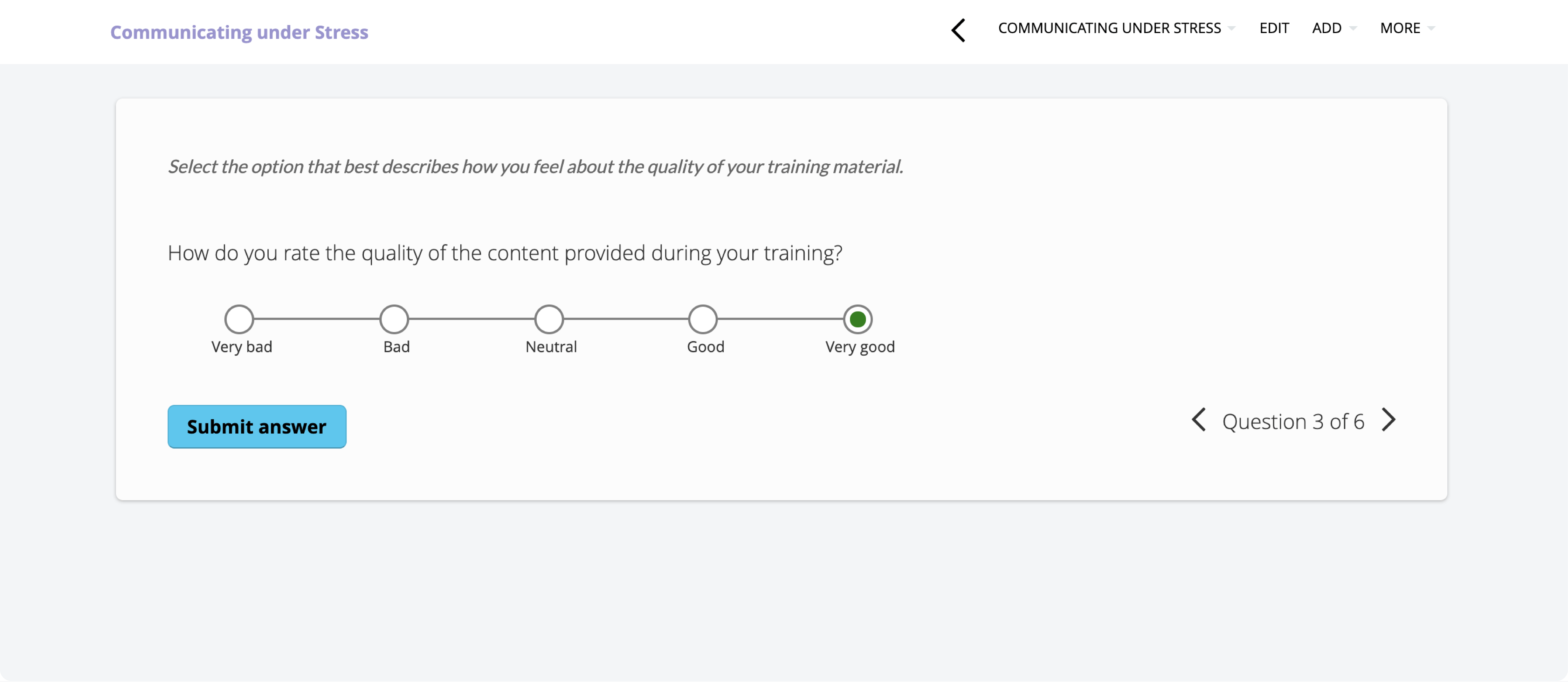

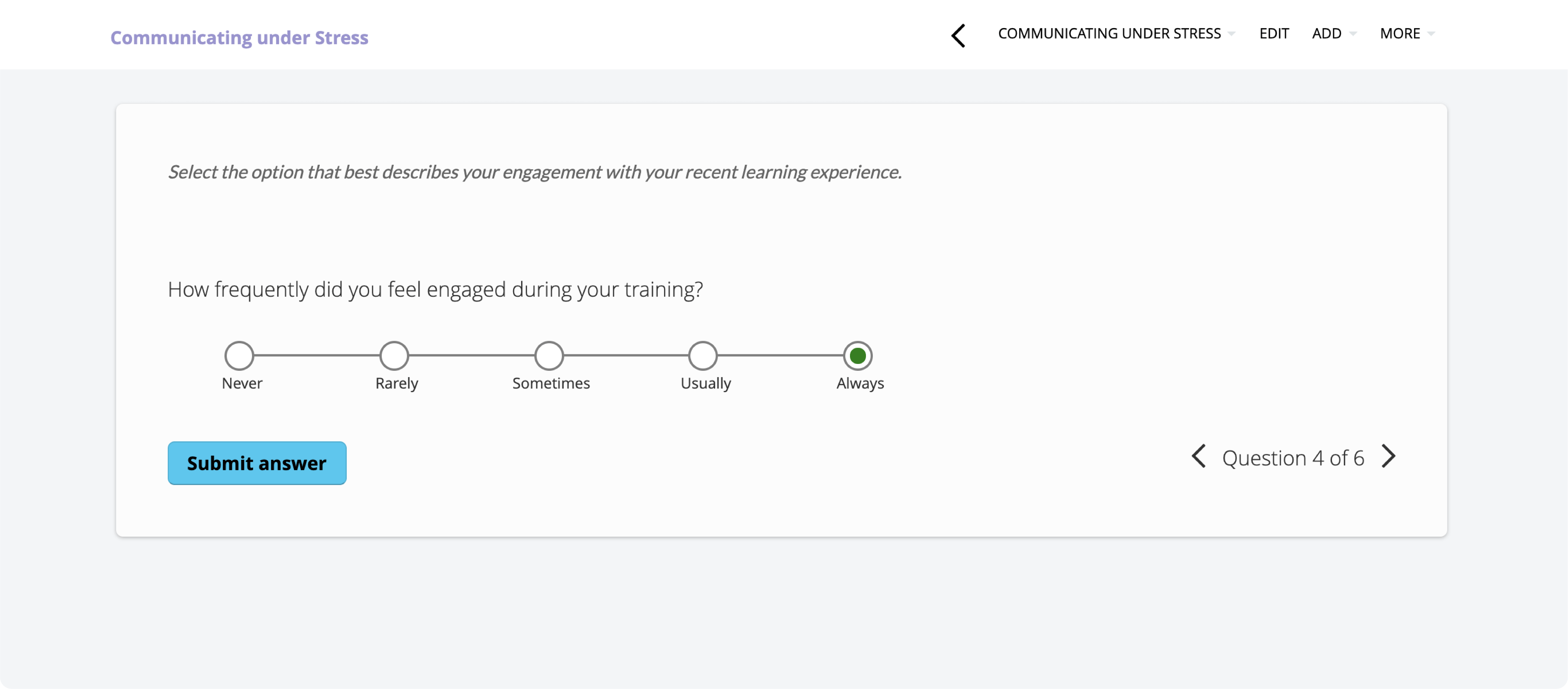

To help you produce Likert scale questions in an instant, TalentLMS has done all of the prep work for you. There are five different scales all set up and ready to choose from. These cover: level of agreement, level of satisfaction, level of quality, likelihood, and frequency. And each of these five scales has five pre-configured responses already assigned to it. Or, if you prefer a bit more control and creativity, you can create your own custom responses by choosing a “custom scale.”

Either way, it’s simple and straightforward. All you need to do is:

- Provide a description. (A line of text that offers a bit of context or guidance for your user.) For example, “Select the option that best describes your recent learning experience.”

- Add a question or statement that relates to this. For example, “How satisfied were you with your trainer?” or “I found the topic of the presentation interesting.”

- Assign a response type. Choose one of the following five scale types with pre-configured responses, or create your own custom responses:

  - Level of Agreement

  - Level of Satisfaction

  - Level of Quality

  - Frequency

  - Likelihood

  - Custom responses

3. When should I gather training feedback?

Training starts as soon as you make a new hire and continues all the way throughout the employee lifecycle. Which is why it’s important to gather feedback at all of the different learning and development stages. Starting with onboarding.

Onboarding training is particularly important because it sets the tone for your company’s ongoing learning and development programs. Get it wrong and your new hire is twice as likely to move on before they’ve even really moved in.

So, whether it’s LMS training, organizational alignment training, or some initial job-related training, a well-designed onboarding survey will give you the insights you need to improve your offering. And hold onto your new hires.

But don’t skip feedback during other training sessions, either. Whether you’re hosting a workshop for your sales team, organizing leadership training for your managers, or giving a company-wide presentation on cybersecurity best practices, post-training feedback will help gauge participants’ opinions and improve your future L&D programs.

4. What kind of training feedback should I collect?

Generally speaking, post-training evaluation measures the effectiveness of a training program—from activities learners most enjoyed and those they struggled with, to how much they learned. And this, in turn, helps pinpoint areas for improvement.

But there are lots of different components that work together to form a training program. And gathering specific feedback for each of these different elements will deliver more specific and actionable insights.

Stuck for ideas? If you’re wondering which topics to cover in your post-training evaluation survey, here are some to consider:

- Before the course: Pre-training preparation, goals, and objectives.

- Course structure: Structure and flow, assessments and engagement.

- Content: Quality, variety, language, detail, or level of challenge.

- Delivery: Interactivity, connection, engagement.

- Duration: Course, assignments, and tests.

- The trainer: Empathy, expertise, communication skills.

- UX/UI design: Interface, navigation, layout.

- Technical issues: Bugs, broken links, functionality.

- Environment: Distractions, where they were, what device they used (mobile, PC).

- Accessibility: Audio guides, font, volume.

- Outcome: Goals, expectations, recommendations.
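One way to make feedback on these separate elements actionable is to tag each rating with its topic and compare averages. The sketch below is a minimal Python illustration, assuming ratings on a 1 to 5 scale. The sample data and the `average_by_topic` function are made up for the example and don’t reflect any TalentLMS report format.

```python
from collections import defaultdict
from statistics import mean

# Made-up sample data: (topic, rating on a 1-5 scale).
ratings = [
    ("Content", 4),
    ("Content", 5),
    ("Delivery", 3),
    ("Delivery", 2),
    ("Technical issues", 4),
    ("Content", 4),
]


def average_by_topic(rows):
    """Group ratings by topic and return the average rating for each topic."""
    grouped = defaultdict(list)
    for topic, score in rows:
        grouped[topic].append(score)
    return {topic: mean(scores) for topic, scores in grouped.items()}


averages = average_by_topic(ratings)
# The topic with the lowest average is a candidate for improvement first.
weakest = min(averages, key=averages.get)

print(weakest)
```

With this sample data, the lowest average belongs to “Delivery”, so that’s where you’d look first. With real feedback, you’d also want enough responses per topic before drawing conclusions.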

Training feedback that drives improvements

Simply gathering training feedback isn’t enough. You need to be able to accurately analyze that feedback, produce specific and actionable insights from it, and have the time to do so. Which is where TalentLMS’s built-in survey functionality comes in.

Create structured and consistently formatted surveys in minutes. Generate detailed reports just as quickly. Cover complex topics in an accessible and easy-to-evaluate way. Produce a smooth, seamless, and confidential user experience that raises response rates.

And, with everything automated and all of the prep work done for you, you can focus your time where it matters: evaluating your findings and fine-tuning your courses so they’re better every time you run them.